By: Morgan Dixon

The Dilemma of Data Fragmentation: Structural Impairments to Civic Intelligence

The promise of Agentic Artificial Intelligence (AI) remains largely unrealized in the public sector because of significant structural barriers. While private-sector applications use generative models to synthesize complex datasets at high speed, interactions between citizens and state governments are frequently obstructed by antiquated information architectures.

The United States’ decentralized federalist structure, while foundational to its governance, has produced a fragmented digital landscape of 50 distinct state regulatory regimes and thousands of municipal jurisdictions. Over the past two decades, government transparency initiatives have relied primarily on “Open Data Portals”: Web 2.0 repositories of static PDF documents, unstructured CSV files, and isolated dashboards. These formats are adequate for manual retrieval but poorly suited to the requirements of modern AI reasoning engines.

When Large Language Models (LLMs) are asked to interpret complex civic queries, such as analyzing municipal zoning amendments alongside pending state legislation, they must typically rely on web scraping, which often retrieves data from indirect or outdated sources. Because LLMs generate text probabilistically, the absence of direct, structured access to authoritative government databases increases the likelihood of “hallucinations,” in which the model produces legally inaccurate information. Such errors undermine the reliability of AI for civic engagement.

The result is an “N × M” integration bottleneck: each of N civic applications must build and maintain a separate connection to each of M government data sources, so the number of integrations grows multiplicatively. A developer seeking to build a comprehensive civic tool must currently engineer a unique software connector for each of the 50 states. This imposes an “integration tax,” in which the cost of mapping divergent data models, such as reconciling disparate state definitions of budgetary metrics, exceeds the resources of most civic-tech organizations. Consequently, critical government data remains siloed and inaccessible to automated analysis.
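The arithmetic behind the bottleneck can be made concrete. This sketch assumes that, without a shared standard, every civic tool needs its own connector for every state, while with a standard each tool implements the interface once and each state exposes it once. The function names and tool counts are hypothetical and chosen only for illustration.

```python
def bespoke_connectors(tools: int, states: int) -> int:
    """Point-to-point integration: one custom connector per tool per state (N x M)."""
    return tools * states


def standardized_connectors(tools: int, states: int) -> int:
    """Shared standard: each tool and each state implements the interface once (N + M)."""
    return tools + states


if __name__ == "__main__":
    STATES = 50
    for tools in (1, 10, 100):  # hypothetical numbers of civic-tech tools
        print(f"{tools:>3} tools: bespoke={bespoke_connectors(tools, STATES):>5}, "
              f"standardized={standardized_connectors(tools, STATES):>4}")
```

With a single tool the standard saves nothing (51 implementations versus 50 connectors); the advantage appears as more tools reuse the same 50 state endpoints, reaching 150 implementations versus 5,000 connectors at 100 tools.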

The API Encasement Methodology: Facilitating Federated Interoperability

Traditional policy responses to data fragmentation often favor the consolidation of information into a centralized federal repository. This framework, however, posits that such an approach is both technologically high-risk and constitutionally precarious. The forced migration of state-level data into a singular federal hub would likely incur prohibitive costs and prompt legal challenges under the Tenth Amendment’s anti-commandeering doctrine.

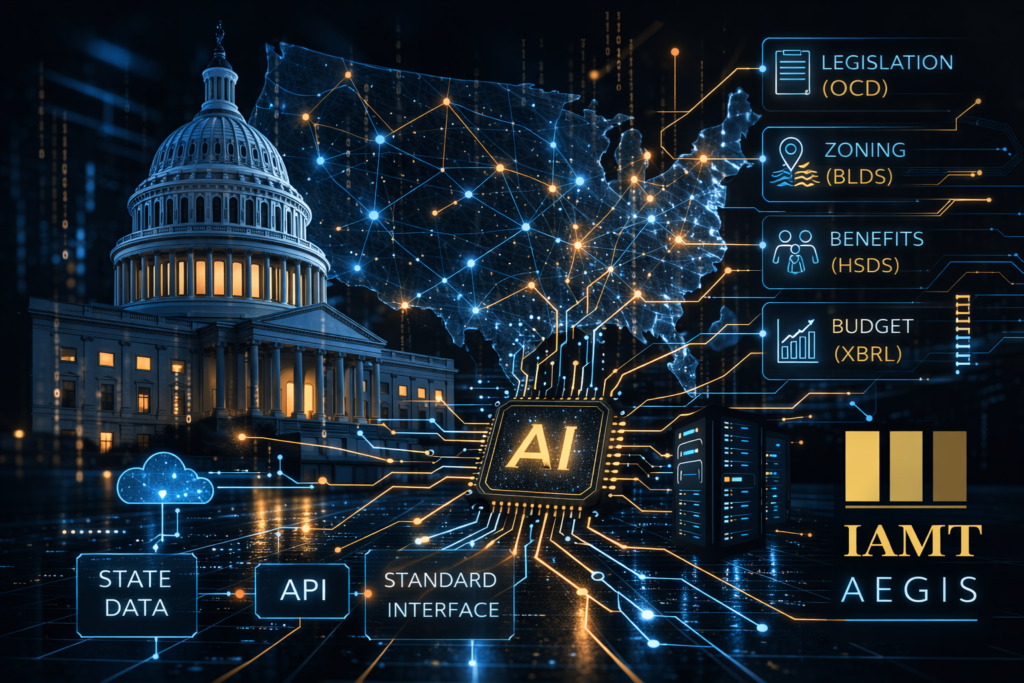

This policy proposes an “API Encasement,” or “Leave and Layer,” strategy. Under this methodology, states retain their existing legacy infrastructure, including proprietary databases and mainframe systems. Interoperability is achieved by mandating a standardized, read-only API layer that acts as an intermediary: it translates standardized federal queries into the legacy system’s native query language, retrieves the relevant data, and returns it in a universal, machine-readable format.

To address the risk of hallucinated or imprecise requests from AI agents, the standardized response schema will incorporate a self-correcting feedback mechanism. When a query does not match the available data, the API will return natural-language descriptions of semantically similar data sources along with their valid endpoints. The agent can then recalibrate and issue a corrected request without human intervention.
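The translation and feedback steps described above can be sketched as follows. This is a minimal illustration under stated assumptions: the catalog, dataset names, legacy table and column names, and `/v1/datasets/...` endpoint paths are all hypothetical; an in-memory SQLite database stands in for the legacy system; and simple string similarity from Python's `difflib` stands in for the semantic matching the text describes (a production system might use embeddings or a curated thesaurus instead).

```python
import difflib
import sqlite3
from typing import Any

# Hypothetical catalog mapping standardized dataset and field names onto a
# legacy system's native table and column names. All names are illustrative.
CATALOG: dict[str, dict[str, Any]] = {
    "zoning_permits": {
        "table": "ZN_PRMT_TBL",
        "fields": {"parcel_id": "PRCL_NO", "status": "PRMT_STAT"},
        "description": "Zoning permit applications by parcel and status.",
    },
    "building_permits": {
        "table": "BLD_PRMT",
        "fields": {"parcel_id": "PARCEL", "status": "STAT_CD"},
        "description": "Issued building permits by parcel and status.",
    },
}


def suggest(dataset: str) -> list[dict[str, str]]:
    """Return similar dataset names with descriptions and (hypothetical) endpoints."""
    close = difflib.get_close_matches(dataset, list(CATALOG), n=3, cutoff=0.4)
    return [
        {
            "dataset": name,
            "description": CATALOG[name]["description"],
            "endpoint": f"/v1/datasets/{name}",
        }
        for name in close
    ]


def handle_query(conn: sqlite3.Connection, dataset: str,
                 filters: dict[str, str]) -> dict[str, Any]:
    """Translate a standardized query into a read-only SQL SELECT on the legacy schema."""
    entry = CATALOG.get(dataset)
    if entry is None:
        # Self-correcting feedback: explain the miss and point to valid alternatives.
        return {"status": "not_found",
                "message": f"No dataset named '{dataset}'. Did you mean one of these?",
                "suggestions": suggest(dataset)}
    unknown = sorted(set(filters) - set(entry["fields"]))
    if unknown:
        return {"status": "invalid_field",
                "message": f"Unknown field(s) {unknown} for '{dataset}'.",
                "valid_fields": sorted(entry["fields"])}
    # Table and column names come only from the catalog, never from the caller;
    # filter values are passed as bound parameters to avoid SQL injection.
    where = " AND ".join(f"{entry['fields'][f]} = ?" for f in filters) or "1 = 1"
    cursor = conn.execute(f"SELECT * FROM {entry['table']} WHERE {where}",
                          list(filters.values()))
    # Translate legacy column names back into the standardized vocabulary.
    to_standard = {legacy: std for std, legacy in entry["fields"].items()}
    columns = [to_standard.get(col[0], col[0]) for col in cursor.description]
    return {"status": "ok", "dataset": dataset,
            "records": [dict(zip(columns, row)) for row in cursor.fetchall()]}


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE ZN_PRMT_TBL (PRCL_NO TEXT, PRMT_STAT TEXT)")
    conn.executemany("INSERT INTO ZN_PRMT_TBL VALUES (?, ?)",
                     [("P-100", "approved"), ("P-200", "pending")])
    print(handle_query(conn, "zoning_permits", {"status": "pending"}))
    print(handle_query(conn, "zoning_permit", {}))  # near-miss triggers suggestions
```

Returning a structured `not_found` payload rather than a bare error code is what lets an agent retry on its own: the suggestions give it both a plausible intended dataset and a valid endpoint to call next.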

The success of this architecture depends on a unified communication standard that supports dynamic, two-way interaction between AI reasoning engines and external government data sources. A universal interface established by federal policy is the kind of framework that major AI laboratories are likely to integrate directly into their foundation models and products. Such native support would allow government databases to be treated as authoritative “tools” rather than as distant, unstructured information silos.

This standardized ecosystem encourages AI builders to optimize their models for “tool calling,” a capability that allows an LLM to retrieve real-time data autonomously by invoking predefined, standardized functions. Authoritative state information then becomes accessible directly within existing consumer and professional chat interfaces, without intermediary software or manual data entry. To support this at scale, Federated API Gateways aggregate multi-departmental queries into a single, cohesive request. This architecture mitigates the latency and instability inherent in fragmented legacy environments, supporting a resilient and responsive civic interface.
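The gateway pattern can be sketched with Python's `asyncio`. In this minimal illustration, three hypothetical department back ends stand in for encased legacy APIs; the gateway queries them concurrently, applies a per-department timeout, and isolates failures so that one unavailable mainframe degrades the answer rather than breaking it. A function like the hypothetical `federated_lookup` is also the kind of single, well-defined operation that could be exposed to an LLM as one tool. The department names, fields, and values are invented for illustration.

```python
import asyncio
from typing import Any, Awaitable, Callable

Backend = Callable[[str], Awaitable[dict[str, Any]]]


# Hypothetical department back ends; each stands in for an encased legacy API.
async def revenue_dept(parcel: str) -> dict[str, Any]:
    await asyncio.sleep(0.01)  # simulated network latency
    return {"parcel": parcel, "tax_status": "current"}


async def zoning_dept(parcel: str) -> dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"parcel": parcel, "zone": "R-2"}


async def permits_dept(parcel: str) -> dict[str, Any]:
    raise ConnectionError("legacy mainframe unavailable")  # simulated outage


BACKENDS: dict[str, Backend] = {
    "revenue": revenue_dept,
    "zoning": zoning_dept,
    "permits": permits_dept,
}


async def call_backend(name: str, backend: Backend, parcel: str,
                       timeout: float) -> tuple[str, dict[str, Any]]:
    """Call one department, converting timeouts and errors into a structured result."""
    try:
        data = await asyncio.wait_for(backend(parcel), timeout)
        return name, {"ok": True, "data": data}
    except Exception as exc:  # includes timeouts; one failure must not sink the request
        return name, {"ok": False, "error": type(exc).__name__}


async def federated_lookup(parcel: str, timeout: float = 1.0) -> dict[str, Any]:
    """Fan a single request out to every department concurrently and merge the results."""
    results = await asyncio.gather(
        *(call_backend(name, fn, parcel, timeout) for name, fn in BACKENDS.items())
    )
    return dict(results)


if __name__ == "__main__":
    print(asyncio.run(federated_lookup("P-100")))
```

Because the departments are queried concurrently, total latency is roughly that of the slowest responding back end (bounded by the timeout) rather than the sum of all of them.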

The Dividends of Semantic Standardization: From Bureaucratic Friction to Civic Intelligence

The operational success of this framework depends on shared semantic ontologies, so that government data is not merely accessible but inherently interpretable by AI reasoning engines. By mandating domain-specific standards, such as Open Civic Data (OCD) for legislation, the Building & Land Development Specification (BLDS) for permitting and land development, the Human Services Data Specification (HSDS) for human services, and the eXtensible Business Reporting Language (XBRL) for finance, the federal government can deliver benefits that extend well beyond technical efficiency.
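At its simplest, semantic standardization means every publisher maps its native field names onto one shared vocabulary. The sketch below assumes two hypothetical states that report the same budget figure under different names; the crosswalk tables, state identifiers, and shared terms are invented for illustration and are not taken from any of the standards named above.

```python
from typing import Any

# Hypothetical crosswalks from each state's native field names to a shared
# vocabulary. A real deployment would map to terms defined by a published
# standard; these labels are illustrative and not taken from any taxonomy.
CROSSWALKS: dict[str, dict[str, str]] = {
    "state_a": {"GenFundExp": "general_fund_expenditures", "FY": "fiscal_year"},
    "state_b": {"gf_spending_total": "general_fund_expenditures",
                "budget_year": "fiscal_year"},
}


def normalize(state: str, record: dict[str, Any]) -> dict[str, Any]:
    """Rename a state's native fields to shared terms, rejecting unmapped fields."""
    mapping = CROSSWALKS[state]
    unmapped = sorted(set(record) - set(mapping))
    if unmapped:
        # Failing loudly beats silently dropping data an agent might rely on.
        raise ValueError(f"{state}: no shared term for {unmapped}")
    return {mapping[field]: value for field, value in record.items()}


if __name__ == "__main__":
    a = normalize("state_a", {"GenFundExp": 1_200_000, "FY": 2024})
    b = normalize("state_b", {"gf_spending_total": 950_000, "budget_year": 2024})
    # Both records now share keys, so they can be compared or aggregated directly.
    print(a)
    print(b)
```

A crosswalk resolves naming differences only. If two states define a metric differently (for example, counting different items as general-fund spending), the renamed values are still not comparable; that deeper agreement on definitions is what shared standards are meant to supply.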

In the legislative and municipal domains, this transition enables a shift from reactive inquiry to proactive analysis. With bills and zoning ordinances published as machine-readable records, AI agents can synthesize disparate state formats into a unified view, allowing citizens to track regulatory alignment or assess the feasibility of a property development quickly. This replaces today’s reliance on non-searchable archives and static maps, which impose a persistent tax on civic participation.

Beyond utility, this semantic integration serves a profound human purpose: reducing “administrative burden.” This concept, which encompasses the learning, compliance, and psychological costs of navigating bureaucracy, acts as an invisible barrier that keeps billions of dollars in benefits from reaching the people entitled to them. Using standardized, relational service data, AI assistants can function as virtual caseworkers, cross-referencing an individual’s eligibility against available resources in real time and guiding people through the maze of the modern safety net.
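The cross-referencing step a virtual caseworker performs can be reduced to matching a household's circumstances against each service's published criteria. In this minimal sketch the programs, counties, and income limits are entirely hypothetical and do not reflect any real program's rules; the `Household` and `Service` types are illustrative, not fields from any specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Household:
    size: int
    monthly_income: float
    county: str


@dataclass
class Service:
    name: str
    counties: set[str]
    # Hypothetical monthly income limits keyed by household size.
    income_limits: dict[int, float]


def matching_services(household: Household, services: list[Service]) -> list[str]:
    """Return names of services whose (hypothetical) rules the household appears to meet."""
    matches = []
    for service in services:
        limit = service.income_limits.get(household.size)
        # Household sizes missing from a limits table are treated as not eligible.
        if (household.county in service.counties and limit is not None
                and household.monthly_income <= limit):
            matches.append(service.name)
    return matches


SERVICES = [
    Service("Hypothetical Food Assistance", {"County A", "County B"},
            {1: 1600.0, 2: 2200.0, 3: 2800.0}),
    Service("Hypothetical Utility Credit", {"County B"},
            {1: 1400.0, 2: 1900.0, 3: 2400.0}),
]

if __name__ == "__main__":
    print(matching_services(Household(2, 1800.0, "County B"), SERVICES))
```

Real eligibility rules involve far more factors than income and location, so output like this is best treated as a screening aid that points people toward programs worth applying for, not as an eligibility determination.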

This shift similarly redefines fiscal accountability. By replacing the “paper under glass” of static financial reports with digitally tagged expenditure data, states can offer a far greater degree of transparency. Automated audits can turn municipal budgets into verifiable figures, allowing the public to track revenue and spending precisely. Ultimately, this framework could sharply reduce reliance on the labor-intensive cycle of Freedom of Information Act (FOIA) and public-records requests, fostering an environment where civic data is open by default and machine-readable by design.
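An automated audit over tagged data can be as simple as recomputing totals from line items and comparing them with the figure a report states. This minimal sketch assumes a handful of hypothetical line items; the tag names and amounts are invented and are not elements of any official XBRL taxonomy. It uses Python's `Decimal` type rather than floats so that currency arithmetic is exact.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Any

# Hypothetical tagged expenditure records. The tag names are illustrative and
# are not drawn from any official XBRL taxonomy.
LINE_ITEMS: list[dict[str, Any]] = [
    {"tag": "PublicSafetyExpenditure", "amount": Decimal("1250000.00")},
    {"tag": "ParksExpenditure", "amount": Decimal("310000.50")},
    {"tag": "RoadMaintenanceExpenditure", "amount": Decimal("789000.25")},
    {"tag": "ParksExpenditure", "amount": Decimal("15000.00")},
]


def totals_by_tag(items: list[dict[str, Any]]) -> dict[str, Decimal]:
    """Sum amounts per tag so spending can be tracked by category."""
    totals: defaultdict[str, Decimal] = defaultdict(Decimal)
    for item in items:
        totals[item["tag"]] += item["amount"]
    return dict(totals)


def audit(items: list[dict[str, Any]], reported_total: Decimal) -> dict[str, Any]:
    """Recompute the total from line items and compare it with the reported figure."""
    computed = sum((item["amount"] for item in items), Decimal("0"))
    return {"computed": computed, "reported": reported_total,
            "difference": computed - reported_total,
            "matches": computed == reported_total}


if __name__ == "__main__":
    print(totals_by_tag(LINE_ITEMS))
    # A reported total that disagrees with the line items by 10,000.
    print(audit(LINE_ITEMS, Decimal("2374000.75")))
```

Because every figure carries a machine-readable tag, the same check can run on every report a jurisdiction publishes rather than on the sample an auditor has time to review by hand.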

Enforcement and Federal Grant Conditionality

The structural integrity of this mandate is secured through the strategic use of federal fiscal levers. By tying compliance to the distribution of major federal grant funds, ranging from infrastructure investments such as the BEAD broadband program to essential human services such as SNAP and Medicaid, the federal government can promote a uniform standard of interoperability. This mechanism does not merely incentivize adoption; it establishes digital readiness as a prerequisite for participating in the modern federalist partnership.

The rise of Agentic Artificial Intelligence calls for an urgent and comprehensive reimagining of our national data architecture. By pairing an API encasement strategy with rigorous semantic standards, the United States can preserve the constitutional sovereignty of the states while building a transparent, resilient, and machine-readable civic ecosystem. This framework offers a concrete opportunity to turn the friction of bureaucracy into a catalyst for democratic innovation.

About the Author:

Morgan Dixon is an entrepreneur and community leader dedicated to leveraging technology for social good and economic growth. His journey began at age fifteen with a business supporting students with dyslexia and autism, an initiative that later evolved into the founding of Imagination Initiative Inc., a nonprofit focused on providing technology access to low-income families across the Pacific Northwest.

Dixon’s early leadership was recognized when he became the youngest two-time recipient of Kootenai County’s “30 Under 40” award and received the Pin Wheel Award for child abuse prevention. Through his work with the Innovation Collective and various technology firms, he has launched startups, placed dozens of local professionals into new employment opportunities, and developed advanced machine learning models across multiple domains.

He holds a Bachelor’s degree in Economics and a Master’s in Machine Learning Engineering from Colorado State University, and is currently pursuing a PhD in Computer Science at the University of Idaho. His technical work extends into public health and infrastructure systems, where he serves as a contractor and data scientist for the Oregon Health Authority under a SAMHSA grant supporting evaluation of the 988 data ecosystem.

In both private- and public-sector engagements, Dixon maintains a strong commitment to his roots in Coeur d’Alene and the broader Pacific Northwest region.